Look, if you aren’t weighing Bun AI performance vs Python for your stack in 2026, you’re basically choosing to work in slow motion.

Python has been the king of AI forever. We get it. It’s got the libraries, the community, and the massive weight of inertia. But let’s be real: Python’s runtime is a dinosaur. It’s slow, it’s clunky, and managing environments with pip or conda feels like doing taxes while someone screams in your ear.

Enter Bun.

Since Anthropic swallowed Bun late last year, the game has shifted. We aren’t just talking about a faster way to run JavaScript. We’re talking about a runtime built for the era of agentic AI, where every millisecond of latency in your tool-calling loop actually matters.

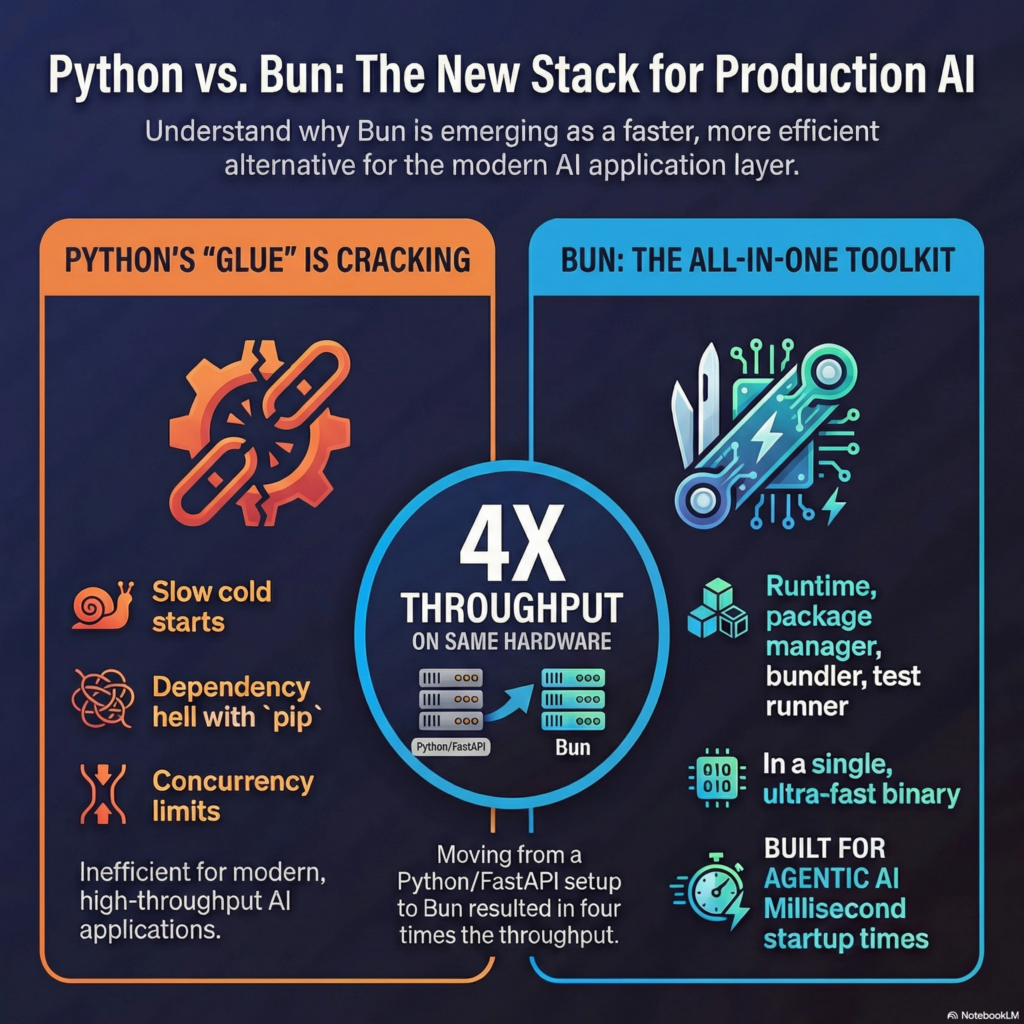

Why the Python “Glue” is Cracking for Modern Agents

Everyone says Python is just “glue” code for fast C++ kernels. That was a fine excuse in 2022. But in a world where AI agents are spinning up thousands of micro-tasks, that glue has become thick, sticky mud.

- Cold Starts: Python takes forever to boot. If you’re running serverless AI functions, you’re paying for that lag.

- The Dependency Hell: `requirements.txt` is a joke. We’ve all spent three hours fixing a broken Torch installation.

- Concurrency: Python’s Global Interpreter Lock (GIL) is still a nightmare for high-throughput APIs compared to what we’re seeing in the Bun AI performance vs Python benchmarks.
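To put a rough number on the cold-start point, here is a back-of-the-envelope sketch. Every input is a made-up placeholder, not a measurement; `billedStartupSeconds` and its fields are hypothetical names, so plug in your own invocation counts and boot times.

```typescript
// Placeholder inputs for illustration only. Replace with your own measurements.
interface ColdStartInput {
  coldStarts: number; // cold invocations per month
  startupMs: number;  // runtime boot time per cold start, in milliseconds
}

// Total time per month spent just booting the runtime, in seconds.
function billedStartupSeconds({ coldStarts, startupMs }: ColdStartInput): number {
  return (coldStarts * startupMs) / 1000;
}

// Hypothetical comparison: same traffic, two different boot times.
const slow = billedStartupSeconds({ coldStarts: 50_000, startupMs: 400 });
const fast = billedStartupSeconds({ coldStarts: 50_000, startupMs: 20 });

console.log(`startup seconds saved per month: ${slow - fast}`);
```

The formula is the useful part: the cost of a slow boot scales linearly with how often your functions start cold, so measure that count before you decide anything.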

Bun AI Performance vs Python: The “Fast Forward” Button

Bun is written in Zig. It uses the JavaScriptCore engine (the Safari stuff). It’s lean. It’s mean.

I recently moved a production inference wrapper from a FastAPI/Python setup to Bun + Elysia. The difference? Total insanity. We’re talking 4x the throughput on the same hardware. When you look at Bun AI performance vs Python, Bun’s startup time alone, which is measured in milliseconds, makes Python look like it’s stuck in 1995.

Anyway, here’s why the switch actually works:

- Native TypeScript: No more `tsc` build step. No more `ts-node`. You just run `bun index.ts` and it works. (Bun strips the types rather than checking them, so keep a type-check in CI.) AI models love writing types; Bun loves running them.

- The All-In-One Death Star: Bun isn’t just a runtime. It’s the package manager, the bundler, and the test runner. I deleted `jest`, `webpack`, and `npm` from my repo. One binary to rule them all.

- FFI that Doesn’t Suck: By Bun’s own benchmarks, its Foreign Function Interface (FFI) is roughly 2-6x faster than Node-API. It lets you call native C or Rust libraries with almost zero overhead. If you need raw math performance for your tensors, you still get it without the Python tax.
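Here’s a minimal sketch of what the “types all the way down” point can look like in a tool-calling layer. The tool names (`add`, `upper`) and every function here are hypothetical, invented for illustration; none of this is a Bun or SDK API. Save it as `index.ts` and it should run with `bun index.ts`, no build step.

```typescript
// Hypothetical tools for illustration. A discriminated union keeps each
// tool's arguments tied to its name.
type ToolCall =
  | { tool: "add"; args: { a: number; b: number } }
  | { tool: "upper"; args: { text: string } };

type ToolResult = { ok: true; value: string } | { ok: false; error: string };

// Model output arrives as untrusted JSON, so validate it before dispatching.
function parseToolCall(raw: string): ToolCall | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof data !== "object" || data === null) return null;
  const { tool, args } = data as { tool?: unknown; args?: unknown };
  if (typeof args !== "object" || args === null) return null;
  const fields = args as Record<string, unknown>;
  const a = fields["a"];
  const b = fields["b"];
  const text = fields["text"];
  if (tool === "add" && typeof a === "number" && typeof b === "number") {
    return { tool: "add", args: { a, b } };
  }
  if (tool === "upper" && typeof text === "string") {
    return { tool: "upper", args: { text } };
  }
  return null;
}

// The switch is checked against the union, so adding a new tool without
// handling it becomes a type error.
function runTool(call: ToolCall): ToolResult {
  switch (call.tool) {
    case "add":
      return { ok: true, value: String(call.args.a + call.args.b) };
    case "upper":
      return { ok: true, value: call.args.text.toUpperCase() };
  }
}

function handle(raw: string): ToolResult {
  const call = parseToolCall(raw);
  return call ? runTool(call) : { ok: false, error: "invalid tool call" };
}

console.log(handle('{"tool":"add","args":{"a":2,"b":3}}'));
```

The runtime validation in `parseToolCall` is doing real work here: the types only describe what you expect, and a model can still hand you anything.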

The Anthropic Factor and Bun AI Performance vs Python

But here’s the real kicker: Anthropic didn’t buy Bun just for fun. They’re baking Claude directly into the workflow.

With things like Claude Code, the AI isn’t just suggesting snippets; it’s managing the runtime. Because Bun starts in milliseconds, an AI agent can run a test, catch a bug, and deploy a fix before you’ve even finished your first sip of coffee. When evaluating Bun AI performance vs Python, you have to consider this “agentic loop” speed. Python’s overhead kills the flow.
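As a rough sketch of that run-test, catch-bug, fix loop: the code below is not how Claude Code works internally, and `fixLoop`, `runTests`, and `proposeFix` are hypothetical names. In a real setup `runTests` might shell out to `bun test` and `proposeFix` might ask a model for a patch; here they are stubs so the control flow is visible.

```typescript
// Hypothetical shapes for illustration, not any real agent API.
type TestReport = { passed: boolean; failure?: string };

interface LoopDeps {
  runTests: () => TestReport;            // e.g. wrap `bun test` in a real setup
  proposeFix: (failure: string) => void; // e.g. request and apply a model patch
}

interface LoopResult {
  passed: boolean;
  testRuns: number;
}

// Run the tests, and on failure apply a fix and try again, up to maxRuns test runs.
// No fix is applied after the final run, so nothing ships unverified.
function fixLoop(deps: LoopDeps, maxRuns = 5): LoopResult {
  for (let run = 1; run <= maxRuns; run++) {
    const report = deps.runTests();
    if (report.passed) return { passed: true, testRuns: run };
    if (run < maxRuns) deps.proposeFix(report.failure ?? "unknown failure");
  }
  return { passed: false, testRuns: maxRuns };
}

// Stub demo: two "bugs", each fix removes one.
let bugs = 2;
const result = fixLoop({
  runTests: () =>
    bugs === 0 ? { passed: true } : { passed: false, failure: `${bugs} bug(s) left` },
  proposeFix: () => {
    bugs -= 1;
  },
});

console.log(result); // passes on the third test run
```

Every pass through the loop pays the runtime’s startup cost once per test run, which is why boot time shows up so directly in how snappy the agent feels.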

Is it perfect?

Hell no. If you’re doing heavy scientific data crunching with Pandas or obscure BioPython stuff, stay where you are. The ecosystem isn’t 100% there yet. But for 90% of AI application layers (the agents, the RAG pipelines, the API wrappers), Bun is eating Python’s lunch.

So, what now? Stop clinging to the “standard” because it’s safe. Try running your next agentic script in Bun. Your CPU (and your sanity) will thank you.

